LAST YEAR, THE Japanese company SoftBank opened a cell phone store in Tokyo and staffed it entirely with sales associates named Pepper. This wasn’t as hard as it sounds, since all the Peppers were robots.

Humanoid robots, to be more precise, which SoftBank describes as “kindly, endearing, and surprising.” Each Pepper is equipped with three multidirectional wheels, an anticollision system, multiple sensors, a pair of arms, and a chest-mounted tablet that allows customers to enter information. Pepper can “express his own emotions” and use a 3-D camera and two HD cameras “to identify movements and recognize the emotions on the faces of his interlocutors.”

The talking bot can supposedly identify joy, sadness, anger, and surprise and determine whether a person is in a good or bad mood—abilities that Pepper’s engineers figured would make “him” an ideal personal assistant or salesperson. And sure enough, there are more than 10,000 Peppers now at work in SoftBank stores, Pizza Huts, cruise ships, homes, and elsewhere.

In a less anxious world, Pepper might come across as a cute technological novelty. But for many pundits and prognosticators, he’s a sign of something much more grave: the growing obsolescence of human workers. (Images of the doe-eyed Pepper have accompanied numerous articles with variations on the headline “robots are coming for your job.”)

Over the past few years, it has become conventional wisdom that dramatic advances in robotics and artificial intelligence have put us on the path to a jobless future. We are living in the midst of a “second machine age,” to quote the title of the influential book by MIT researchers Erik Brynjolfsson and Andrew McAfee, in which routine work of all kinds—in manufacturing, sales, bookkeeping, food prep—is being automated at a steady clip, and even complex analytical jobs will be superseded before long. A widely cited 2013 study by researchers at the University of Oxford, for instance, found that nearly half of all jobs in the US were at risk of being fully automated over the next 20 years. The endgame, we’re told, is inevitable: The robots are on the march, and human labor is in retreat.

This anxiety about automation is understandable in light of the hair-raising progress that tech companies have made lately in robotics and artificial intelligence, which is now capable of, among other things, defeating Go masters, outbluffing champs in Texas Hold’em, and safely driving a car. And the notion that we’re on the verge of a radical leap forward in the scale and scope of automation certainly jibes with the pervasive feeling in Silicon Valley that we’re living in a time of unprecedented, accelerating innovation. Some tech leaders, including Y Combinator’s Sam Altman and Tesla’s Elon Musk, are so sure this jobless future is imminent—and, perhaps, so wary of torches and pitchforks—that they’re busy contemplating how to build a social safety net for a world with less work. Hence the sudden enthusiasm in Silicon Valley for a so-called universal basic income, a stipend that would be paid automatically to every citizen, so that people can have something to live on after their jobs are gone.

It’s a dramatic story, this epoch-defining tale about automation and permanent unemployment. But it has one major catch: There isn’t actually much evidence that it’s happening.

IMAGINE YOU’RE THE pilot of an old Cessna. You’re flying in bad weather, you can’t see the horizon, and a frantic, disoriented passenger is yelling that you’re headed straight for the ground. What do you do? No question: You trust your instruments—your altimeter, your compass, and your artificial horizon—to give you your actual bearings, and keep flying.

Now imagine you’re an economist back on the ground, and a panic-stricken software engineer is warning that his creations are about to plow everyone straight into a world without work. Just as surely, there are a couple of statistical instruments you know to consult right away to see if this prediction checks out. If automation were, in fact, transforming the US economy, two things would be true: Aggregate productivity would be rising sharply, and jobs would be harder to come by than in the past.

Take productivity, which is a measure of how much the economy puts out per hour of human labor. Since automation allows companies to produce more with fewer people, a great wave of automation should drive higher productivity growth. Yet, in reality, productivity gains over the past decade have been, by historical standards, dismally low. Back in the heyday of the US economy, from 1947 to 1973, labor productivity grew at an average pace of nearly 3 percent a year. Since 2007, it has grown at a rate of around 1.2 percent, the slowest pace of any period since World War II. And over the past two years, productivity has grown at a mere 0.6 percent, during the very years when anxiety about automation has spiked. That’s simply not what you’d see if efficient robots were replacing inefficient humans en masse. As McAfee puts it, “Low productivity growth does fly in the face of the story we tell about amazing technological progress.”

Now, it’s possible that some of the productivity slowdown is the result of humans shifting out of factories into service jobs (which have historically been less productive than factory jobs). But even productivity growth in manufacturing, where automation and robotics have been well-established for decades, has been especially paltry of late. “I’m sure there are factories here and there where automation is making a difference,” says Dean Baker, an economist at the Center for Economic and Policy Research. “But you can’t see it in the aggregate numbers.”

Nor does the job market show signs of an incipient robopocalypse. Unemployment is below 5 percent, and employers in many states are complaining about labor shortages, not labor surpluses. And while millions of Americans dropped out of the labor force in the wake of the Great Recession, they’re now coming back—and getting jobs. Even more strikingly, wages for ordinary workers have risen as the labor market has improved. Granted, the wage increases are meager by historical standards, but they’re rising faster than inflation and faster than productivity. That’s something that wouldn’t be happening if human workers were on the fast track to obsolescence.

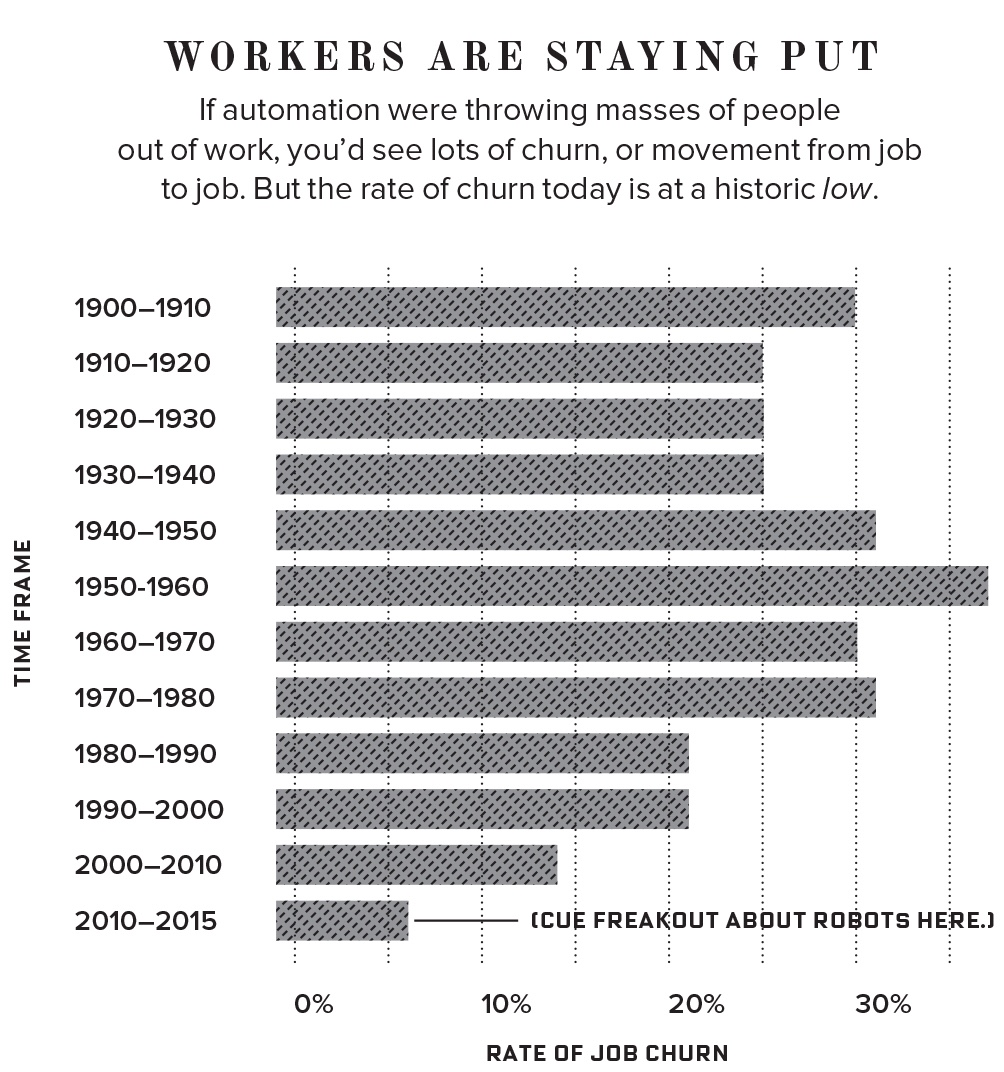

If automation were truly remaking the job market, you’d also expect to see a lot of what economists call job churn as people move from company to company and industry to industry after their jobs have been destroyed. But we’re seeing the opposite of that. According to a recent paper by Robert Atkinson and John Wu of the Information Technology and Innovation Foundation, “Levels of occupational churn in the United States are now at historic lows.” The amount of churn since 2000—an era that saw the mainstreaming of the internet and the advent of AI—has been just 38 percent of the level of churn between 1950 and 2000. And this squares with the statistics on median US job tenure, which has lengthened, not shortened, since 2000. In other words, rather than a period of enormous disruption, this has been one of surprising stability for much of the American workforce. Median job tenure today is actually similar to what it was in the 1950s—the era we think of as the pinnacle of job stability.

NONE OF THIS is to say that automation and AI aren’t having an important impact on the economy. But that impact is far more nuanced and limited than the doomsday forecasts suggest. A rigorous study of the impact of robots in manufacturing, agriculture, and utilities across 17 countries, for instance, found that robots did reduce the hours of lower-skilled workers—but they didn’t decrease the total hours worked by humans, and they actually boosted wages. In other words, automation may affect the kind of work humans do, but at the moment, it’s hard to see that it’s leading to a world without work. McAfee, in fact, says of his earlier public statements, “If I had to do it over again, I would put more emphasis on the way technology leads to structural changes in the economy, and less on jobs, jobs, jobs. The central phenomenon is not net job loss. It’s the shift in the kinds of jobs that are available.”

McAfee points to both retail and transportation as areas where automation is likely to have a major impact. Yet even in those industries, the job-loss numbers are less scary than many headlines suggest. Goldman Sachs just released a report predicting that autonomous cars could ultimately eliminate 300,000 driving jobs a year. But that won’t happen, the firm argues, for another 25 years, which is more than enough time for the economy to adapt. A recent study by the Organization for Economic Cooperation and Development, meanwhile, predicts that 9 percent of jobs across 21 different countries are under serious threat from automation. That’s a significant number, but not an apocalyptic one.

Granted, there are much scarier forecasts out there, like that University of Oxford study. But on closer examination, those predictions tend to assume that if a job can be automated, it will be fully automated soon, which overestimates both the pace and the completeness of how automation actually gets adopted in the wild. History suggests that the process is much more uneven than that. The ATM, for instance, is a textbook case of a machine that was designed to replace human labor. First introduced around 1970, ATMs hit widespread adoption in the late 1990s. Today, there are more than 400,000 ATMs in the US. But, as economist James Bessen has shown, the number of bank tellers actually rose between 2000 and 2010. That’s because even though the average number of tellers per branch fell, ATMs made it cheaper to open branches, so banks opened more of them. True, the Department of Labor does now predict that the number of tellers will decline by 8 percent over the next decade. But that’s 8 percent, not 50 percent. And it’s 45 years after the robot that was supposed to replace them made its debut. (Taking a wider view, Bessen found that of the 271 occupations listed on the 1950 census, only one, elevator operator, had been rendered obsolete by automation by 2010.)

Of course, if automation is happening much faster today than it did in the past, then historical statistics about simple machines like the ATM would be of limited use in predicting the future. Ray Kurzweil’s book *The Singularity Is Near* (which, by the way, came out 12 years ago) describes the moment when a technological society hits the “knee” of an exponential growth curve, setting off an explosion of mutually reinforcing new advances. Conventional wisdom in the tech industry says that’s where we are now and that, as futurist Peter Nowak puts it, “the pace of innovation is accelerating exponentially.” Here again, though, the economic evidence tells a different story. In fact, as a recent paper by Lawrence Mishel and Josh Bivens of the Economic Policy Institute puts it, “automation, broadly defined, has actually been slower over the last 10 years or so.” And lately, the pace of microchip advancement has started to lag behind the schedule predicted by Moore’s law.

Corporate America, for its part, certainly doesn’t seem to believe in the jobless future. If the rewards of automation were as immense as predicted, companies would be pouring money into new technology. But they’re not. Investments in software and IT grew more slowly over the past decade than in the previous one. And capital investment, according to Mishel and Bivens, has grown more slowly since 2002 than in any other postwar period. That’s exactly the opposite of what you’d expect in a rapidly automating world. As for gadgets like Pepper, total spending on all robotics in the US was just $11.3 billion last year. That’s about a sixth of what Americans spend every year on their pets.

SO IF THE data doesn’t show any evidence that robots are taking over, why are so many people, even outside Silicon Valley, convinced it’s happening? In the US, at least, it’s partly due to the coincidence of two widely observed trends. Between 2000 and 2009, 6 million US manufacturing jobs disappeared, and wage growth across the economy stagnated. In that same period, industrial robots were becoming more widespread, the internet seemed to be transforming everything, and AI became really useful for the first time. So it seemed logical to connect these phenomena: Robots had killed the good-paying manufacturing job, and they were coming for the rest of us next.

But something else happened in the global economy right around 2000 as well: China entered the World Trade Organization and massively ramped up production. And it was this, not automation, that really devastated American manufacturing. A recent paper by the economists Daron Acemoglu and Pascual Restrepo—titled, fittingly, “Robots and Jobs”—got a lot of attention for its claim that industrial automation has been responsible for the loss of up to 670,000 jobs since 1990. But just in the period between 1999 and 2011, trade with China was responsible for the loss of 2.4 million jobs: almost four times as many. “If you want to know what happened to manufacturing after 2000, the answer is very clearly not automation, it’s China,” Dean Baker says. “We’ve been running massive trade deficits, driven mainly by manufacturing, and we’ve seen a precipitous plunge in the number of manufacturing jobs. To say those two things aren’t correlated is nuts.” (In other words, Donald Trump isn’t entirely wrong about what’s happened to American factory jobs.)

Nevertheless, automation will indeed destroy many current jobs in the coming decades. As McAfee says, “When it comes to things like AI, machine learning, and self-driving cars and trucks, it’s still early. Their real impact won’t be felt for years yet.” What’s not obvious, though, is whether the impact of these innovations on the job market will be much bigger than the massive impact of technological improvements in the past. The outsourcing of work to machines is not, after all, new—it’s the dominant motif of the past 200 years of economic history, from the cotton gin to the washing machine to the car. Over and over again, as vast numbers of jobs have been destroyed, others have been created. And over and over, we’ve been terrible at envisioning what kinds of new jobs people would end up doing.

Even our fears about automation and computerization aren’t new; they closely echo the anxieties of the late 1950s and early 1960s. Observers then too were convinced that automation would lead to permanent unemployment. The Ad Hoc Committee on the Triple Revolution—a group of scientists and thinkers concerned about the impact of what was then called cybernation—argued that “the capability of machines is rising more rapidly than the capacity of many human beings to keep pace.” Cybernation “has broken the link between jobs and income, exiling from the economy an ever-widening pool of men and women,” wrote W. H. Ferry, of the Center for the Study of Democratic Institutions, in 1965. Change “cybernation” to “automation” or “AI,” and all that could have been written today.

THE PECULIAR THING about this historical moment is that we’re afraid of two contradictory futures at once. On the one hand, we’re told that robots are coming for our jobs and that their superior productivity will transform industry after industry. If that happens, economic growth will soar and society as a whole will be vastly richer than it is today. But at the same time, we’re told that we’re in an era of secular stagnation, stuck with an economy that’s doomed to slow growth and stagnant wages. In this world, we need to worry about how we’re going to support an aging population and pay for rising health costs, because we’re not going to be much richer in the future than we are today. Both of these futures are possible. But they can’t both come true. Fretting about both the rise of the robots and secular stagnation doesn’t make any sense. Yet that’s precisely what many intelligent people are doing.

The irony of our anxiety about automation is that if the predictions about a robot-dominated future were to come true, a lot of our other economic concerns would vanish. A recent study by Accenture, for instance, suggests that the implementation of AI, broadly defined, could lift annual GDP growth in the US by two percentage points (to 4.6 percent). A growth rate like that would make it easy to deal with the cost of things like Social Security and Medicare and the rising price of health care. It would lead to broader wage growth. And while it would complicate the issue of how to divide the economic pie, it’s always easier to divide a growing pie than a shrinking one.

Alas, the future this study envisions seems to be very far off. To be sure, the fact that fears about automation have been proved false in the past doesn’t mean they will continue to be so in the future, and all of those long-foretold positive feedback loops and exponential growth may abruptly kick in someday. But it isn’t easy to see how we’ll get there from here anytime soon, given how little companies are investing in new technology and how slowly the economy is growing. In that sense, the problem we’re facing isn’t that the robots are coming. It’s that they aren’t.

Source: https://www.wired.com/2017/08/robots-will-not-take-your-job/?mbid=social_fb